HTR+ versus PyLaia, Part 2

Release 1.12.0

A few weeks ago we reported on our first experiences with PyLaia while training a generic model (600,000 words of ground truth, GT).

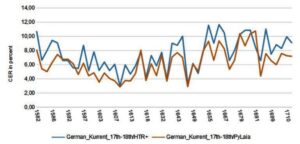

Today we want to make another attempt to compare PyLaia and HTR+. This time we have a larger model available (German_Kurrent_17th-18th; 1.8 million words of GT). The model was trained from scratch both as a PyLaia model and as an HTR+ model, with identical ground truth and under the same conditions.

Our hypothesis that PyLaia shows its advantages over HTR+ with larger generic models has been fully confirmed. In the case shown, PyLaia is superior to HTR+ in every respect: both with and without the language model, the PyLaia model achieved a character error rate (CER) about one percentage point lower than HTR+ on all our test sets.
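CER is usually defined as the character-level edit distance (insertions, deletions and substitutions) between the recognised text and the ground truth, divided by the number of ground-truth characters. The minimal Python sketch below assumes that standard definition; Transkribus's own evaluation may normalise whitespace or punctuation differently. The reference line and the two hypotheses are made up purely to show what a gap of one percentage point means on a 100-character line.

```python
def levenshtein(ref: str, hyp: str) -> int:
    """Classic dynamic-programming edit distance between two strings."""
    prev = list(range(len(hyp) + 1))  # distances from the empty ref prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,             # ref char missing in hyp (deletion)
                curr[j - 1] + 1,         # extra char in hyp (insertion)
                prev[j - 1] + (r != h),  # substitution, free if chars match
            ))
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    """Character error rate: edit distance divided by reference length."""
    if not ref:
        raise ValueError("reference text must not be empty")
    return levenshtein(ref, hyp) / len(ref)


# Hypothetical 100-character ground-truth line and two model outputs.
ref = "abcdefghij" * 10
hyp_a = "XYZ" + ref[3:]  # 3 character errors
hyp_b = "XY" + ref[2:]   # 2 character errors

print(f"model A: {cer(ref, hyp_a):.2%}, model B: {cer(ref, hyp_b):.2%}")
```

Here model B's CER is one percentage point lower than model A's, i.e. one fewer error per 100 ground-truth characters.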

By the way, over the last few weeks PyLaia's performance on “curved” text lines has also improved significantly.